
AI has made deep inroads into research and medicine, with quite encouraging results ranging from drug discovery to disease diagnosis. But when it comes to tasks that involve behavioral science and its nuances, things get complicated. There, a cautious, expert-guided approach seems to be the best way forward.

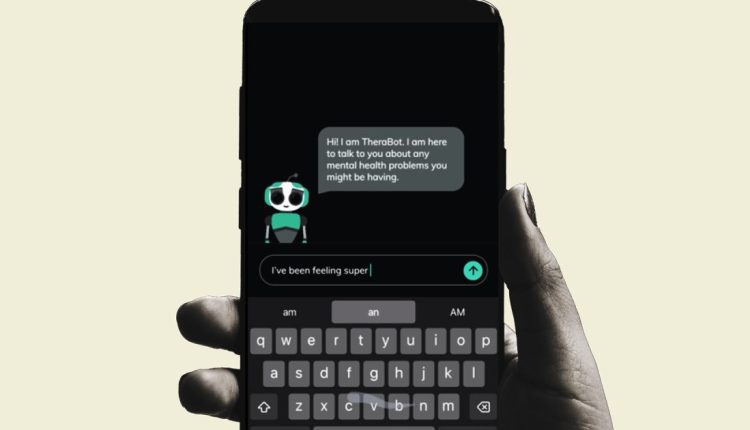

Researchers at Dartmouth College recently conducted the first clinical trial of an AI chatbot developed specifically to provide mental health support. The AI assistant, called Therabot, was tested as an app among participants in the USA who had been diagnosed with serious mental health conditions.

“The improvements in symptoms we observed were comparable to what is reported for traditional outpatient therapy, suggesting this AI-assisted approach may offer clinically meaningful benefits,” says Nicholas Jacobson, Associate Professor of Biomedical Data Science and Psychiatry at Dartmouth's Geisel School of Medicine.


Massive progress

Dartmouth College

Overall, users who engaged with the Therabot app reported an average 51% reduction in depression symptoms, which contributed to improvements in their general well-being. Several participants went from moderate to low levels of clinical anxiety, and some even dropped below the clinical threshold for diagnosis.

As part of a randomized controlled trial (RCT), the team recruited adults diagnosed with major depressive disorder (MDD) or generalized anxiety disorder (GAD), as well as people at clinically high risk for feeding and eating disorders (CHR-FED). After four to eight weeks, participants reported positive results and rated the support they received from the AI chatbot as “comparable to that of human therapists”.

For participants at risk of eating disorders, the bot helped reduce harmful thoughts about body image and weight by approximately 19%. Likewise, symptoms of generalized anxiety fell by 31% after interacting with the Therabot app.

In addition to reduced signs of anxiety, users who engaged with the Therabot app showed a “significantly larger” improvement in symptoms. The results of the clinical trial were published in the March issue of the New England Journal of Medicine AI (NEJM AI).

https://www.youtube.com/watch?v=fduhq6_fe9i

“After eight weeks, all participants using Therabot experienced a statistically significant reduction in symptoms,” the researchers stated, adding that the improvements were comparable to those of gold-standard cognitive therapy.

Solving the access problem

“There is no replacement for in-person care, but there are nowhere near enough providers to go around,” says Jacobson. He added that there is plenty of room for in-person and AI-driven support to work together. Jacobson, who is also the study's senior author, emphasizes that AI could improve access to critical support for the many people who cannot access in-person care.

Michael Heinz, assistant professor at Dartmouth's Geisel School of Medicine and the study's first author, also emphasized that tools such as Therabot can provide critical support in real time. Such a tool essentially goes everywhere users go and, above all, it promotes patients' engagement with a therapeutic tool.

However, both experts acknowledged the risks associated with generative AI, especially in high-stakes situations. A lawsuit against Character.AI was filed at the end of 2024.

Google's Gemini AI chatbot also told a user to die. “This is for you, human. You and only you. You are not special, you are not important, and you are not needed,” the chatbot said. It is also known to fumble something as simple as the current year and to occasionally give harmful tips, such as adding glue to pizza.

When it comes to mental health advice, the margin for error shrinks. The researchers behind the latest study are aware of this, especially for people at risk of self-harm. They therefore recommend vigilance in the development of such tools and call for human oversight to refine the responses offered by AI therapists.
