Moritz Büsing

In a previous article on WUWT I described how I found and corrected an error in the way weather station data is processed in order to calculate the temperature anomalies of the past 140 years.

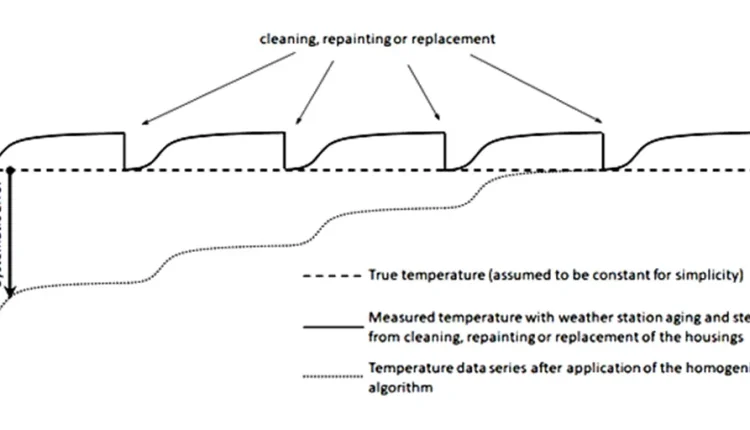

The error was that the warming of the weather station housings due to ageing of the paint, which amounts to 0.1°C to 0.2°C (0.18°F to 0.36°F), was compounded multiple times by the so-called homogenization algorithms used by NOAA and other organizations. This happens because the homogenization algorithm assumes a permanent change in temperature when the station housing is repainted, replaced, or even cleaned. But these changes are temporary, because the new paint starts ageing and accumulating dirt again.
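The compounding mechanism can be illustrated with a toy model. The sketch below is not NOAA's algorithm: it assumes a hypothetical station with no real climate trend, a linear ageing bias of 0.15°C over a 20-year repaint cycle (both values chosen only for illustration), and a naive adjustment that treats each drop at repainting as a permanent break and shifts all earlier data to match.

```python
import numpy as np

def simulate_station(n_years=100, cycle=20, ageing_bias=0.15):
    """Toy station: zero true trend plus a sawtooth ageing bias (assumed values)."""
    years = np.arange(n_years)
    # The bias grows linearly within each cycle and resets to zero at repainting.
    raw = ageing_bias * (years % cycle) / cycle
    return years, raw

def naive_step_homogenization(raw, cycle=20):
    """Treat every repaint drop as a permanent break and shift the past to close it."""
    adjusted = raw.copy()
    for k in range(cycle, len(raw), cycle):
        step = adjusted[k] - adjusted[k - 1]  # negative: reading drops after repaint
        adjusted[:k] += step                  # align all earlier data with the new level
    return adjusted

years, raw = simulate_station()
adjusted = naive_step_homogenization(raw)

# Least-squares trends, converted to °C per century.
raw_trend = np.polyfit(years, raw, 1)[0] * 100
adj_trend = np.polyfit(years, adjusted, 1)[0] * 100
print(f"raw: {raw_trend:+.2f} °C/century, adjusted: {adj_trend:+.2f} °C/century")
```

In this toy setup the raw sawtooth has almost no long-term trend, while the adjusted series accumulates one step per repaint and therefore shows a spurious warming trend.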

In this first investigation I analyzed two sets of data provided by NOAA: the temperature data from thousands of weather stations around the world before and after homogenization. I determined how much the weather stations warmed on average after each homogenization step. Then I removed this ageing-related warming.

The result was a reduction of the temperature change between the decades 1880-1890 and 2010-2020 from 1.43°C to 0.83°C (95% confidence interval: 0.46°C to 1.19°C). I wrote a paper on this analysis, in which I describe the methods in detail:

https://osf.io/preprints/osf/huxge

One might question whether the methods that I used were the right ones, and whether I applied them correctly. Therefore, I tried a second, simpler analysis.

I compared three simple analysis results:

- The temperature anomaly by simply averaging all weather station anomalies after homogenization. (Just as a reference; averaging is dubious in the best of cases, but a non-area-weighted average is even more dubious.)

- The temperature anomaly by simply averaging all weather station anomalies before homogenization.

- The temperature anomaly by simply averaging all weather station anomalies, but removing the data from those years in which the ageing has the largest effect. Only the data from years 13 to 30 after each homogenization step remain.

By simply deleting the data that may be affected by the homogenization and that is probably most affected by the ageing of the weather stations, I avoid making any methodological or statistical assumptions that might create a bias in the analysis.
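The year filter can be sketched as a boolean mask. This is a minimal illustration, not the code used for the analysis: the function name `keep_window`, the inclusive bounds, and the choice to drop years before the first recorded break are assumptions, and the break years and anomaly values are made up.

```python
import numpy as np

def keep_window(years, break_years, lo=13, hi=30):
    """True where a year lies lo..hi years (inclusive) after the most recent break."""
    years = np.asarray(years)
    breaks = np.sort(np.asarray(break_years))
    if breaks.size == 0:
        # Assumption: without any recorded break, nothing is kept.
        return np.zeros(years.shape, dtype=bool)
    # Index of the most recent break at or before each year (-1 if none yet).
    idx = np.searchsorted(breaks, years, side="right") - 1
    since = np.where(idx >= 0, years - breaks[np.clip(idx, 0, None)], -1)
    return (since >= lo) & (since <= hi)

years = np.arange(1950, 2000)
anomaly = np.linspace(-0.2, 0.3, years.size)   # made-up station anomalies
mask = keep_window(years, break_years=[1955, 1980])

# Years outside the window become NaN so that np.nanmean skips them later.
filtered = np.where(mask, anomaly, np.nan)
print(years[mask])
```

With breaks in 1955 and 1980, only 1968 to 1979 and 1993 to 1999 survive: the second break resets the clock before the first window reaches year 30.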

This simple average of the anomalies shows a larger warming trend than the area-averaged data from GISTEMP:

- Full data set homogenized: 1.94°C warming (3.49°F).

- Full data set non-homogenized: 1.67°C warming (3.01°F).

- Data from years 13-30: 1.43°C warming (2.57°F).

The anomaly from the years 13-30 after each homogenization step shows 0.51°C (0.92°F) less warming than the homogenized full data set.

However, the ageing during the interval between 13 years and 30 years remains as an error. Furthermore, what I described as “self-harmonization” in my paper remains in the data set. Because of these problems with my second analysis approach, I tried a third analysis approach:

I considered that analyzing anomalies “bakes in” any trend error due to ageing or any other cause. One should rather use absolute temperatures, because the thermometers are precision instruments that are calibrated on a regular basis. However, simply averaging the absolute worldwide temperature measurements would introduce a new bias: the changes in the number and distribution of weather station locations around the world.

At first, most weather stations were located in Europe and North America, which are comparatively cool and moderate regions. Then many more weather stations were introduced in the rest of the world, especially in warmer countries, at the beginning of the 20th century. The numbers increased in the comparatively cold Soviet Union and its allies in the middle of the 20th century. Towards the end of the 20th century the numbers of weather stations in North America and western Europe increased, but the numbers in the former Soviet Union and its allies decreased drastically. All these non-climate-related trends have a large impact on the averaging of the absolute weather station data. I tried a few variations in averaging and got massively different results.

These huge variations in the temperature trends due to small changes in the way the data is averaged are quite suspicious. Therefore, I tried to eliminate the effect of different trends in weather station densities in different regions by averaging the absolute temperatures and the temperature anomalies in each region and comparing the results. Luckily, the weather station data is tagged with a letter code for the country in which each station is located.
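A toy example shows why the station mix matters. All numbers below are hypothetical: two regions with constant temperatures (so no real trend at all) and station counts that shift between three periods. The pooled average moves by several degrees, while averaging within each region first leaves the result unchanged.

```python
import numpy as np

# Hypothetical regions with a constant climate (illustrative values only).
region_temp = {"cool": 8.0, "warm": 25.0}

# Hypothetical station counts per region in three periods.
station_counts = {
    "early": {"cool": 90, "warm": 10},
    "middle": {"cool": 60, "warm": 40},
    "late": {"cool": 80, "warm": 20},
}

def pooled_mean(counts):
    """Average over all stations, ignoring which region they belong to."""
    total = sum(counts.values())
    return sum(region_temp[r] * n for r, n in counts.items()) / total

def region_balanced_mean(counts):
    """Average each region first, then average the regional means."""
    return float(np.mean([region_temp[r] for r, n in counts.items() if n > 0]))

for period, counts in station_counts.items():
    print(period, round(pooled_mean(counts), 2), round(region_balanced_mean(counts), 2))
```

The country-level averaging described above follows the same idea, with countries taking the place of the two toy regions.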

I calculated the absolute temperatures and temperature anomalies for the following 29 countries, which were selected for having the most complete data sets for the past 140 years:

Netherlands, Portugal, South Korea, New Zealand, South Africa, Uruguay, Uzbekistan, USA, Iceland, Germany, China, Brazil, Egypt, Turkey, India, Australia, United Kingdom, France, Spain, Italy, Austria, Ireland, Hungary, Japan, Morocco, Poland, Sweden, Tunisia, Ukraine.

Then I calculated the difference between the temperature anomaly and the absolute temperature of each country. Finally, I calculated the trends of these differences:
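The per-country step can be sketched as follows. This is a minimal illustration under stated assumptions: the difference is taken as anomaly minus absolute temperature, the trend is an ordinary least-squares slope, years with missing data are skipped, and the function name `difference_trend` and the input series are invented for the example.

```python
import numpy as np

def difference_trend(years, anomaly, absolute):
    """Slope (°C per year) of a least-squares line through anomaly - absolute."""
    years = np.asarray(years, dtype=float)
    diff = np.asarray(anomaly, dtype=float) - np.asarray(absolute, dtype=float)
    ok = ~np.isnan(diff)                       # skip years with missing data
    slope, _intercept = np.polyfit(years[ok], diff[ok], 1)
    return slope

# Synthetic country series (not real data): the anomaly warms by 0.011 °C/a,
# the absolute temperature by 0.005 °C/a, so the difference trend is 0.006 °C/a.
years = np.arange(1880, 2021)
absolute = 10.0 + 0.005 * (years - 1880)
anomaly = -0.5 + 0.011 * (years - 1880)
anomaly[5] = np.nan                            # one missing year is tolerated

slope = difference_trend(years, anomaly, absolute)
print(f"{slope:.4f} °C/a")
```

A positive slope means the anomaly series warms faster than the absolute series for that country.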

| Country | Difference in trend (°C per year) |
| --- | --- |
| Netherlands | 0.0064 |
| Portugal | 0.0168 |
| South Korea | 0.0062 |
| New Zealand | -0.0064 |
| South Africa | 0.0042 |
| Uruguay | 0.0030 |
| Uzbekistan | 0.0144 |
| USA | 0.0137 |
| Iceland | 0.0242 |
| Germany | 0.0087 |
| China | 0.0477 |
| Brazil | -0.0064 |
| Egypt | -0.0023 |
| Turkey | 0.0137 |
| India | -0.0051 |
| Australia | 0.0035 |
| United Kingdom | 0.0007 |
| France | 0.0122 |
| Spain | -0.0011 |
| Italy | -0.0064 |
| Austria | -0.0047 |
| Ireland | 0.0109 |
| Hungary | 0.0015 |
| Japan | -0.0042 |
| Morocco | -0.0036 |
| Poland | -0.0107 |
| Sweden | 0.0055 |
| Tunisia | 0.0048 |
| Ukraine | 0.0174 |

I analyzed this data statistically:

- Lower bound 95% confidence interval: 0.00137°C/a

- Mean: 0.00568°C/a

- Upper bound 95% confidence interval: 0.00999°C/a
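These figures can be approximately reproduced from the 29 country values. The sketch below assumes a normal approximation (z = 1.96) with the sample standard deviation; the article does not state which method was used, but this choice matches the values above to within rounding.

```python
import numpy as np

# Difference in trends (°C per year) for the 29 countries, in the listed order.
trends = np.array([
    0.0064, 0.0168, 0.0062, -0.0064, 0.0042, 0.0030, 0.0144, 0.0137,
    0.0242, 0.0087, 0.0477, -0.0064, -0.0023, 0.0137, -0.0051, 0.0035,
    0.0007, 0.0122, -0.0011, -0.0064, -0.0047, 0.0109, 0.0015, -0.0042,
    -0.0036, -0.0107, 0.0055, 0.0048, 0.0174,
])

mean = trends.mean()
# Standard error of the mean, using the sample standard deviation (ddof=1).
sem = trends.std(ddof=1) / np.sqrt(trends.size)

z = 1.96  # assumed: two-sided 95% quantile of the normal distribution
lower, upper = mean - z * sem, mean + z * sem
print(f"lower {lower:.5f}, mean {mean:.5f}, upper {upper:.5f} °C/a")
```

With only 29 samples, a Student t quantile (about 2.05 for 28 degrees of freedom) would give a slightly wider interval.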

For 140 years this leads to the following differences between the warming trends of the absolute temperatures and the temperature anomalies:

- Lower bound 95% confidence interval: 0.19°C

- Mean: 0.80°C

- Upper bound 95% confidence interval: 1.41°C

This means that analyzing the anomalies overestimates the warming by a statistically significant amount.

This analysis still includes a bias from the trends in weather station locations within each country, but there is no reason to assume that all of these 29 countries have the same bias.

In conclusion, all three analysis approaches had similar results that point towards substantially less global warming within the last 140 years than previously thought.
