Deep learning has come a long way since the days it could only recognize hand-written characters on checks and envelopes. Today, deep neural networks have become a key component of many computer vision applications, from photo and video editors to medical software and self-driving cars.

Roughly fashioned after the structure of the brain, neural networks have come closer to seeing the world as we humans do. But they still have a long way to go and make mistakes in situations where humans would never err.

These situations, generally known as adversarial examples, change the behavior of an AI model in befuddling ways. Adversarial machine learning is one of the greatest challenges of current artificial intelligence systems. Adversarial examples can cause machine learning models to fail in unpredictable ways or become vulnerable to cyberattacks.

Adversarial example: Adding an imperceptible layer of noise to this panda picture causes a convolutional neural network to mistake it for a gibbon.

Creating AI systems that are resilient against adversarial attacks has become an active area of research and a hot topic of discussion at AI conferences. In computer vision, one interesting method to protect deep learning systems against adversarial attacks is to apply findings in neuroscience to close the gap between neural networks and the mammalian vision system.

Using this approach, researchers at MIT and MIT-IBM Watson AI Lab have found that directly mapping the features of the mammalian visual cortex onto deep neural networks creates AI systems that are more predictable in their behavior and more robust to adversarial perturbations. In a paper published on the bioRxiv preprint server, the researchers introduce VOneNet, an architecture that combines current deep learning techniques with neuroscience-inspired neural networks.

The work, done with help from scientists at the University of Munich, Ludwig Maximilian University, and the University of Augsburg, was accepted at NeurIPS 2020, one of the prominent annual AI conferences, which will be held virtually this year.

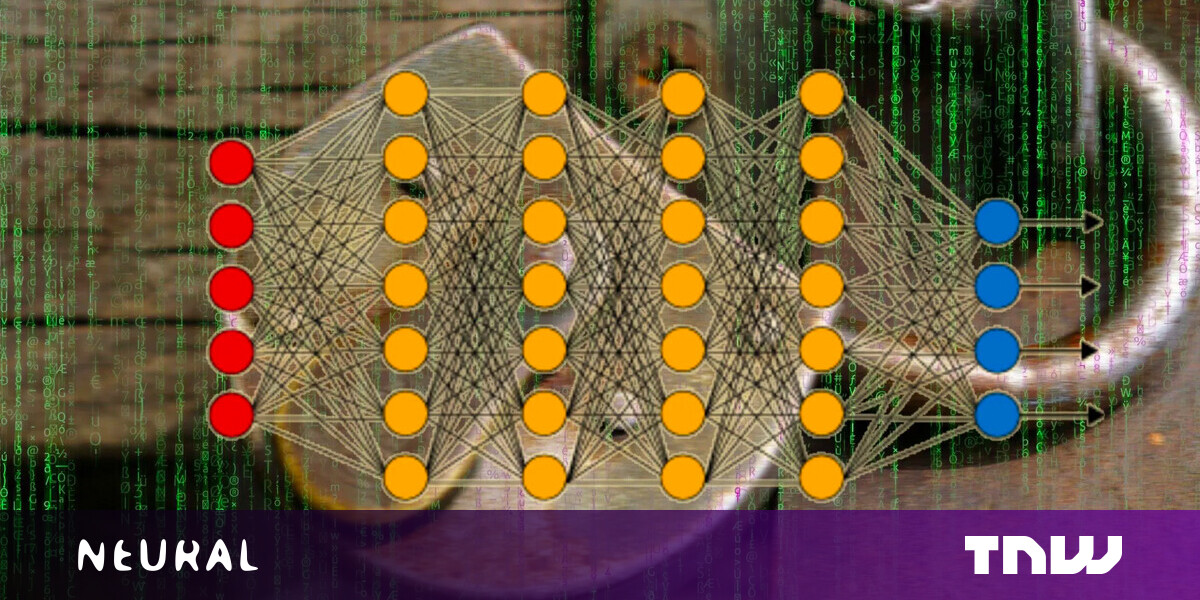

Convolutional neural networks

The main architecture used in computer vision today is the convolutional neural network (CNN). When stacked on top of each other, multiple convolutional layers can be trained to learn and extract hierarchical features from images. Lower layers find general patterns such as corners and edges, and higher layers gradually become adept at finding more specific things such as objects and people.

Each layer of the neural network will extract specific features from the input image.
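
To make the idea of a layer extracting a feature concrete, here is a minimal sketch in plain Python of one convolution followed by a ReLU. The tiny image and the hand-picked Sobel kernel are assumptions for illustration; in a trained CNN the kernel values are learned from data rather than set by hand.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation on list-of-lists inputs."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Keep positive responses and zero out the rest."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Toy 5x6 image: dark left half (0), bright right half (1).
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]

# Hand-picked vertical-edge detector (Sobel kernel), standing in for
# a filter that a lower layer might learn during training.
vertical_edge = [[-1, 0, 1],
                 [-2, 0, 2],
                 [-1, 0, 1]]

edges = relu(conv2d(image, vertical_edge))
for row in edges:
    print(row)  # the response is non-zero only where the window spans the edge
```

The feature map is zero over the flat regions and positive where the window straddles the dark-to-bright boundary. Stacking further layers on top of maps like this one is what lets higher layers respond to more specific patterns.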

In comparison to the traditional fully connected networks, ConvNets have proven to be both more robust and computationally efficient. There remain, however, fundamental differences between the way CNNs and the human visual system process information.

“Deep neural networks (and convolutional neural networks in particular) have emerged as surprisingly good models of the visual cortex: surprisingly, they tend to fit experimental data collected from the brain even better than computational models that were tailor-made for explaining the neuroscience data,” David Cox, IBM Director of MIT-IBM Watson AI Lab, told TechTalks. “But not every deep neural network matches the brain data equally well, and there are some persistent gaps where the brain and the DNNs differ.”

The most prominent of these gaps are adversarial examples, in which subtle perturbations such as a small patch or a layer of imperceptible noise can cause neural networks to misclassify their inputs. These changes go mostly unnoticed to the human eye.
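
The effect of a small perturbation can be sketched with a toy linear classifier. The code below uses a gradient-sign step (in the spirit of the fast gradient sign method, which this article does not name) as one illustrative way to build such a perturbation. The weights, the 100-value input, and the epsilon are hypothetical and hand-set; nothing here is a trained model or the attack used in the research.

```python
def score(weights, bias, x):
    """Linear classifier score: positive means class +1, otherwise class -1."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def predict(weights, bias, x):
    return 1 if score(weights, bias, x) > 0 else -1


def sign(v):
    return (v > 0) - (v < 0)


def gradient_sign_step(weights, x, label, epsilon):
    """One gradient-sign step against a linear model (uses the weights)."""
    # With loss = -label * score, the gradient w.r.t. x_i is -label * w_i.
    perturbed = [xi + epsilon * sign(-label * w) for w, xi in zip(weights, x)]
    # Keep every value inside the valid [0, 1] pixel range.
    return [min(1.0, max(0.0, p)) for p in perturbed]


# Hypothetical 100-"pixel" input and hand-set weights (assumptions).
weights = [0.5 if i % 2 == 0 else -0.5 for i in range(100)]
bias = 0.0
x = [0.52 if i % 2 == 0 else 0.48 for i in range(100)]

x_adv = gradient_sign_step(weights, x, label=1, epsilon=0.03)
largest_change = max(abs(a - b) for a, b in zip(x, x_adv))

print(predict(weights, bias, x))      # class of the clean input
print(predict(weights, bias, x_adv))  # class after the perturbation
print(largest_change)                 # no pixel moves by more than epsilon
```

Each value moves by at most 0.03 on a 0-to-1 scale, yet the many small shifts all push the score in the same direction, which is enough to flip the predicted class in this toy setup.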

AI researchers discovered that by adding small black and white stickers to stop signs, they could make them invisible to computer vision algorithms (Source: arxiv.org)

“It is certainly the case that the images that fool DNNs would never fool our own visual systems,” Cox says. “It’s also the case that DNNs are surprisingly brittle against natural degradations (e.g., adding noise) to images, so robustness in general seems to be an open problem for DNNs. With this in mind, we felt this was a good place to look for differences between brains and DNNs that might be helpful.”

Cox has been exploring the intersection of neuroscience and artificial intelligence since the early 2000s, when he was a student of James DiCarlo, neuroscience professor at MIT. The two have continued to work together since.

“The brain is an incredibly powerful and effective information processing machine, and it’s tantalizing to ask if we can learn new tricks from it that can be used for practical purposes. At the same time, we can use what we know about artificial systems to provide guiding theories and hypotheses that can suggest experiments to help us understand the brain,” Cox says.

Brain-like neural networks

For the new research, Cox and DiCarlo joined Joel Dapello and Tiago Marques, the lead authors of the paper, to see if neural networks became more robust to adversarial attacks when their activations were similar to brain activity. The AI researchers tested several popular CNN architectures trained on the ImageNet data set, including AlexNet, VGG, and different variations of ResNet. They also included some deep learning models that had undergone “adversarial training,” a process in which a neural network is trained on adversarial examples to avoid misclassifying them.

The scientists evaluated the AI models using the “BrainScore” metric, which compares activations in deep neural networks and neural responses in the brain. They then measured the robustness of each model by testing it against white-box adversarial attacks, where an attacker has full knowledge of the structure and parameters of the target neural network.

“To our surprise, the more brain-like a model was, the more robust the system was against adversarial attacks,” Cox says. “Inspired by this, we asked if it was possible to improve robustness (including adversarial robustness) by adding a more faithful simulation of the early visual cortex—based on neuroscience experiments—to the input stage of the network.”

Research shows that neural networks with higher BrainScores are more robust to white-box adversarial attacks.

VOneNet and VOneBlock

To further validate their findings, the researchers developed VOneNet, a hybrid deep learning architecture that combines standard CNNs with a layer of neuroscience-inspired neural networks.

The VOneNet replaces the first few layers of the CNN with the VOneBlock, a neural network architecture fashioned after the primary visual cortex of primates, also known as the V1 area. This means that image data is first processed by the VOneBlock before being passed on to the rest of the network.

The VOneBlock is itself composed of a Gabor filter bank (GFB), simple and complex cell nonlinearities, and neuronal stochasticity. The GFB is similar to the convolutional layers found in other neural networks. But while classic neural networks start with random parameter values and tune them during training, the values of the GFB parameters are determined and fixed based on what we know about activations in the primary visual cortex.
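
The three ingredients named above can be sketched in plain Python. Everything specific here is an assumption made for illustration: the kernel size, sigma and spatial frequency, the rectified (ReLU) simple cell, the quadrature-pair energy used for the complex cell, and the Gaussian noise whose variance equals the mean response. It is not the researchers' implementation or their parameter values.

```python
import math
import random


def gabor_kernel(size, sigma, frequency, theta, phase):
    """Fixed (not learned) Gabor filter: a Gaussian envelope times a cosine."""
    half = size // 2
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            xr = x * math.cos(theta) + y * math.sin(theta)
            yr = -x * math.sin(theta) + y * math.cos(theta)
            envelope = math.exp(-(xr * xr + yr * yr) / (2 * sigma * sigma))
            row.append(envelope * math.cos(2 * math.pi * frequency * xr + phase))
        kernel.append(row)
    return kernel


def response(patch, kernel):
    """Dot product between an image patch and a kernel of the same size."""
    return sum(p * k for prow, krow in zip(patch, kernel)
               for p, k in zip(prow, krow))


def simple_cell(patch, params):
    """Rectified response of a single Gabor filter (phase-sensitive)."""
    return max(0.0, response(patch, gabor_kernel(phase=0.0, **params)))


def complex_cell(patch, params):
    """Energy of a quadrature pair of Gabor filters (roughly phase-invariant)."""
    even = response(patch, gabor_kernel(phase=0.0, **params))
    odd = response(patch, gabor_kernel(phase=math.pi / 2, **params))
    return math.sqrt(even * even + odd * odd)


def add_neuronal_noise(rate, rng):
    """Gaussian noise with variance equal to the mean (Poisson-like)."""
    return rate + rng.gauss(0.0, math.sqrt(rate))


def grating(size, frequency, shift):
    """Vertical sinusoidal grating used as a test stimulus."""
    half = size // 2
    return [[math.cos(2 * math.pi * frequency * x + shift)
             for x in range(-half, half + 1)] for _ in range(size)]


# Hypothetical filter parameters, fixed up front instead of trained.
params = {"size": 9, "sigma": 2.0, "frequency": 0.25, "theta": 0.0}

aligned = grating(9, 0.25, shift=0.0)
shifted = grating(9, 0.25, shift=math.pi / 2)

simple_0, simple_90 = simple_cell(aligned, params), simple_cell(shifted, params)
complex_0, complex_90 = complex_cell(aligned, params), complex_cell(shifted, params)

rng = random.Random(0)
noisy = [add_neuronal_noise(complex_0, rng) for _ in range(2000)]
noisy_mean = sum(noisy) / len(noisy)

print(simple_0, simple_90)    # simple cell: strong, then near zero
print(complex_0, complex_90)  # complex cell: similar for both shifts
print(noisy_mean)             # noisy responses scatter around the clean value
```

In this sketch the simple cell responds strongly to the aligned grating and almost not at all once the grating is shifted by a quarter cycle, while the complex cell gives a similar response to both.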

The VOneBlock is a neural network architecture that mimics the functions of the primary visual cortex

“The weights of the GFB and other architectural choices of the VOneBlock are engineered according to biology. This means that all the choices we made for the VOneBlock were constrained by neurophysiology. In other words, we designed the VOneBlock to mimic as much as possible the primate primary visual cortex (area V1). We considered available data collected over the last four decades from several studies to determine the VOneBlock parameters,” says Tiago Marques, PhD, PhRMA Foundation Postdoctoral Fellow at MIT and co-author of the paper.

While there are significant differences in the visual cortex of different primates, there are also many shared features, especially in the V1 area. “Fortunately, across primates differences seem to be minor, and in fact, there are plenty of studies showing that monkeys’ object recognition capabilities resemble those of humans. In our model we used published available data characterizing responses of monkeys’ V1 neurons. While our model is still only an approximation of primate V1 (it does not include all known data and even that data is somewhat limited – there is a lot that we still do not know about V1 processing), it is a good approximation,” Marques says.

Beyond the GFB layer, the simple and complex cells in the VOneBlock give the neural network flexibility to detect features under different conditions. “Ultimately, the goal of object recognition is to identify the existence of objects independently of their exact shape, size, location, and other low-level features,” Marques says. “In the VOneBlock it seems that both simple and complex cells serve complementary roles in supporting performance under different image perturbations. Simple cells were particularly important for dealing with common corruptions while complex cells with white box adversarial attacks.”

VOneNet in action

One of the strengths of the VOneBlock is its compatibility with current CNN architectures. “The VOneBlock was designed to have a plug-and-play functionality,” Marques says. “That means that it directly replaces the input layer of a standard CNN structure. A transition layer that follows the core of the VOneBlock ensures that its output can be made compatible with the rest of the CNN architecture.”
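
The plug-and-play idea can be illustrated with a toy pipeline in plain Python. All names below (`standard_stem`, `fixed_front_end`, `rest_of_cnn`), the channel counts, and the use of a 1x1 convolution as the transition layer are hypothetical stand-ins chosen for this sketch; they only show why a transition layer is needed when the front end emits a different number of channels than the rest of the network expects.

```python
def compose(*stages):
    """Chain stages so that each one feeds the next."""
    def model(x):
        for stage in stages:
            x = stage(x)
        return x
    return model


def conv1x1(weights):
    """Build a transition layer that mixes channels at every pixel.

    weights has shape [out_channels][in_channels].
    """
    def layer(feature_map):
        return [
            [[sum(w * v for w, v in zip(w_row, pixel)) for w_row in weights]
             for pixel in row]
            for row in feature_map
        ]
    return layer


def standard_stem(image):
    """Stand-in for a learned input layer: 2 channels per pixel."""
    return [[[p, 1.0 - p] for p in row] for row in image]


def fixed_front_end(image):
    """Stand-in for a fixed, biology-constrained block: 4 channels per pixel."""
    return [[[p, p * p, 1.0 - p, (1.0 - p) ** 2] for p in row] for row in image]


def rest_of_cnn(feature_map):
    """Stand-in for the remaining layers: global average over 2 channels."""
    pixels = [pixel for row in feature_map for pixel in row]
    if any(len(pixel) != 2 for pixel in pixels):
        raise ValueError("rest_of_cnn expects 2 channels per pixel")
    return [sum(p[c] for p in pixels) / len(pixels) for c in range(2)]


# Transition weights map 4 channels down to 2 (hand-set here for the sketch).
transition = conv1x1([[0.5, 0.5, 0.0, 0.0],
                      [0.0, 0.0, 0.5, 0.5]])

image = [[0.0, 1.0],
         [1.0, 0.0]]

baseline = compose(standard_stem, rest_of_cnn)
hybrid = compose(fixed_front_end, transition, rest_of_cnn)

baseline_out = baseline(image)
hybrid_out = hybrid(image)
print(baseline_out, hybrid_out)
```

Dropping the transition stage from `hybrid` makes `rest_of_cnn` raise a `ValueError`, because the front end's 4 channels no longer match the 2 channels the remaining layers were built for.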

The researchers plugged the VOneBlock into several CNN architectures that perform well on the ImageNet data set. Interestingly, the addition of this simple block resulted in considerable improvement in robustness to white-box adversarial attacks and outperformed training-based defense methods.

“Simulating the image processing of primate primary visual cortex at the front of standard CNN architectures significantly improves their robustness to image perturbations, even bringing them to outperform state-of-the-art defense methods,” the researchers write in their paper.

Experiments show that convolutional neural networks that have been modified to include the VOneBlock are more resilient against white-box adversarial attacks.

“The model of V1 that we added here is actually quite simple—we’re only altering the first stage of the system, while leaving the rest of the network untouched, and the biological fidelity of this V1 model is still quite simple,” Cox says, adding that there is a lot more detail and nuance one could add to such a model to make it better match what is known about the brain.

“Simplicity is strength in some ways, since it isolates a smaller set of principles that might be important, but it would be interesting to explore whether other dimensions of biological fidelity might be important,” he says.

The paper challenges a trend that has become all too common in AI research in recent years. Instead of applying the latest findings about brain mechanisms in their research, many AI scientists focus on driving advances in the field by taking advantage of the availability of vast computing resources and large data sets to train larger and larger neural networks. And as we’ve discussed in these pages before, that approach presents many challenges to AI research.

VOneNet proves that biological intelligence still has a lot of untapped potential and can address some of the fundamental problems AI research is facing. “The models presented here, drawn directly from primate neurobiology, indeed require less training to achieve more human-like behavior. This is one turn of a new virtuous circle, wherein neuroscience and artificial intelligence each feed into and reinforce the understanding and ability of the other,” the authors write.

In the future, the researchers will further explore the properties of VOneNet and the further integration of discoveries in neuroscience and artificial intelligence. “One limitation of our current work is that while we have shown that adding a V1 block leads to improvements, we don’t have a great handle on why it does,” Cox says.

Developing the theory to help understand this “why” question will enable the AI researchers to ultimately home in on what really matters and to build more effective systems. They also plan to explore the integration of neuroscience-inspired architectures beyond the initial layers of artificial neural networks.

Says Cox, “We’ve only just scratched the surface in terms of incorporating these elements of biological realism into DNNs, and there’s a lot more we can still do. We’re excited to see where this journey takes us.”

This article was originally published by Ben Dickson on TechTalks, a publication that examines trends in technology, how they affect the way we live and do business, and the problems they solve. But we also discuss the evil side of technology, the darker implications of new tech and what we need to look out for. You can read the original article here.

Published December 17, 2020 — 09:36 UTC
