Looking at this nap duration model, Beta 0 is the intercept, the value that the target takes when all features are zero.

The rest of the betas are the unknown coefficients. Together with the intercept, these are the missing parts of the model. You can observe the outcome of combining the different features, but you do not know all the details about how each feature affects the target.

Once you have determined the value of each coefficient, you know the direction, either positive or negative, and the strength of the effect each feature has on the target.

In a linear model, you assume that all features are independent of one another. For example, the fact that you received a delivery doesn’t affect how many treats your dog gets in a day.

In addition, you assume there is a linear relationship between the features and the target.

On days when you can play more with your dog, they get more tired and want to nap longer. And on days when there are no squirrels outside, your dog won’t need to nap as much because they haven’t spent as much energy staying vigilant and keeping an eye on every move the squirrels make.
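To make the form of the model concrete, here is a minimal sketch in plain Python. The feature names and every number below are hypothetical, chosen only for illustration; they are not the values fitted later in the article.

```python
# Hypothetical coefficient values, chosen only to illustrate the model's form.
intercept = 60.0  # beta 0: predicted nap minutes when every feature is zero
coefficients = {
    "delivery": -10.0,
    "treats": 2.0,
    "squirrels": 5.0,
    "play_minutes": 0.5,
}


def predict_nap_minutes(features):
    """Return intercept + sum(coefficient * feature value)."""
    return intercept + sum(
        coefficients[name] * value for name, value in features.items()
    )


all_zero = {name: 0 for name in coefficients}
busy_day = {"delivery": 1, "treats": 3, "squirrels": 4, "play_minutes": 30}

print(predict_nap_minutes(all_zero))  # 60.0, the intercept
print(predict_nap_minutes(busy_day))  # 91.0
```

The sign of each coefficient gives the direction of the effect and its magnitude gives the strength, which is exactly what fitting the model is meant to uncover.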

How long will your dog take a nap tomorrow?

With the general idea of the model in mind, you have been collecting data for a few days. Now you have real observations of your model’s features and target.

A few days’ worth of observations of the features and target for your dog’s nap duration.

However, there are still some critical parts missing: the coefficient values and the intercept.

One of the most popular methods for finding the coefficients of a linear model is Ordinary Least Squares.

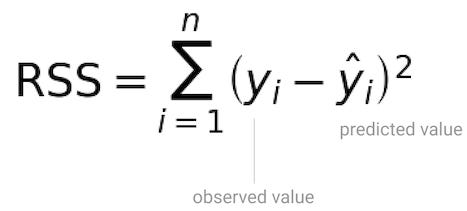

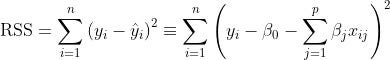

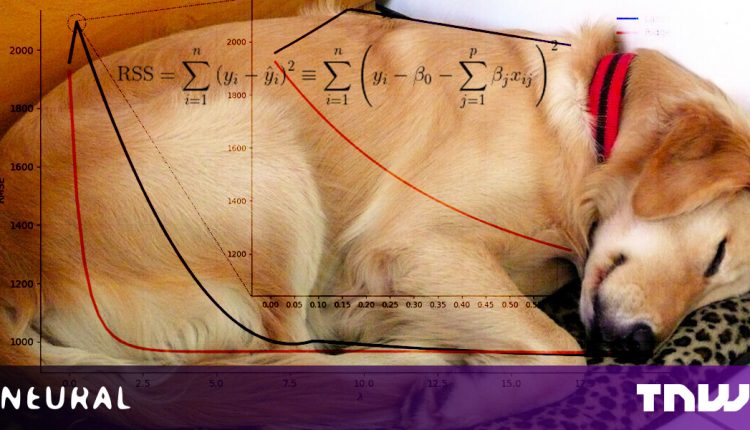

The premise of Ordinary Least Squares (OLS) is that you choose the coefficients that minimize the residual sum of squares (RSS), that is, the total squared difference between your predictions and the observed data[1].

With the residual sum of squares, not all residuals are treated equally. You want to make an example of the times when the model generated predictions that are too far from the observed values.

What matters is not so much whether the prediction is above or below the observed value, but the size of the error. By squaring the residuals, you penalize the predictions that are too far off while making sure you’re only working with positive values.

That way, when RSS is zero, it means that the predictions and the observed values are the same, and it’s not just a by-product of positive and negative residuals cancelling each other out.
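A small sketch of why the squaring matters, using made-up numbers (the observed and predicted values below are illustrative only): the raw residuals can sum to zero even when the predictions are wrong, while the RSS cannot.

```python
def residual_sum_of_squares(observed, predicted):
    """Sum of squared differences between observed and predicted values."""
    return sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))


# Made-up nap durations in minutes.
observed = [60, 80, 100]
predicted = [70, 80, 90]

residuals = [y - y_hat for y, y_hat in zip(observed, predicted)]

print(sum(residuals))                                # 0: errors cancel out
print(residual_sum_of_squares(observed, predicted))  # 200: errors are counted
```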

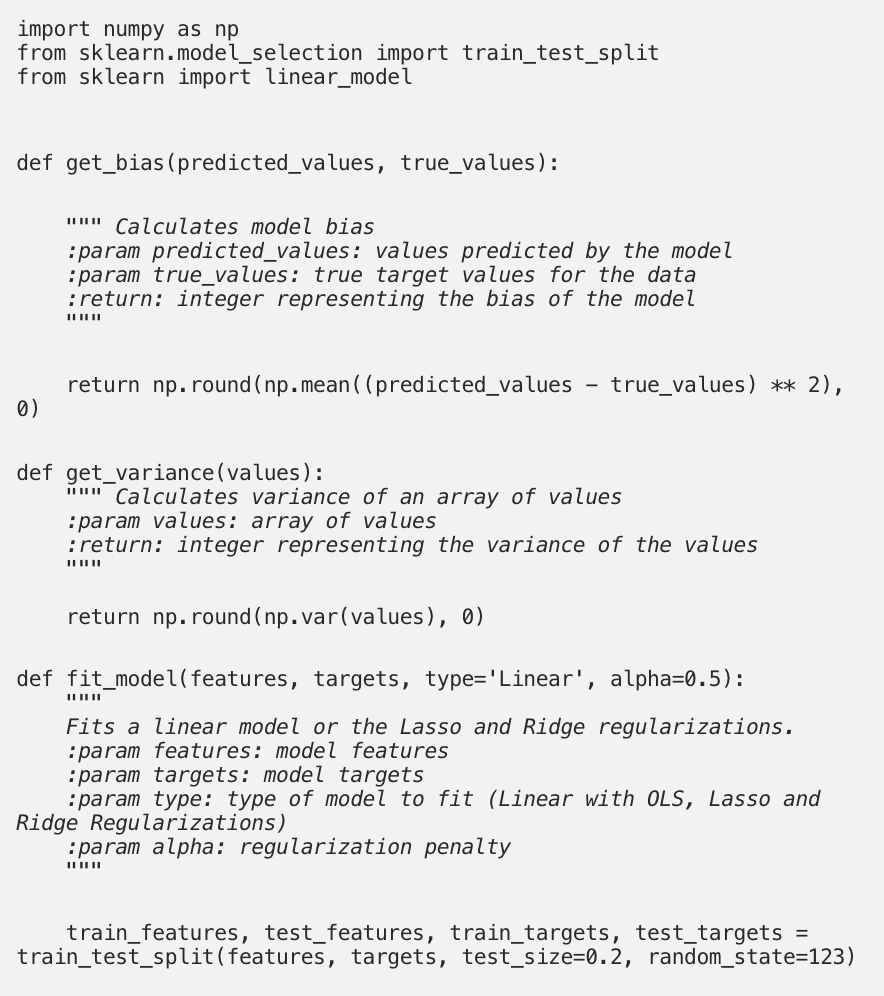

In Python, you can use scikit-learn to fit a linear model to the data using Ordinary Least Squares.

Because you want to test the model on data that it was not trained on, you want to hold out a percentage of your original dataset as a test set. In this case, the test set is built by setting aside 20% of the original dataset.

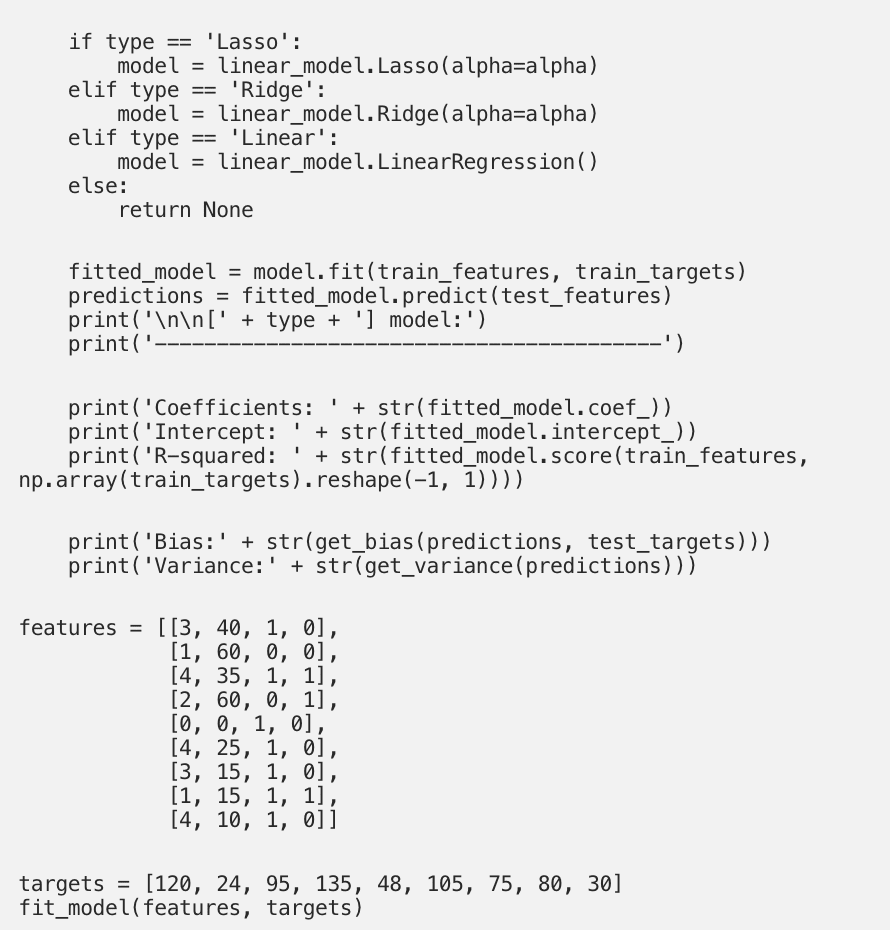

After you fit a linear model to the training set, you can review its properties.
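As a rough sketch of these steps with scikit-learn, assuming synthetic data in place of the article's observations (the four feature columns, the "true" coefficients, and the noise level are all invented here), fitting and inspecting the model could look like this:

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the nap observations: 50 days, 4 features.
rng = np.random.default_rng(0)
n_days = 50
features = rng.normal(size=(n_days, 4))
true_coefs = np.array([-5.0, 2.0, 4.0, 6.0])  # invented for illustration
targets = 60.0 + features @ true_coefs + rng.normal(scale=3.0, size=n_days)

# Hold out 20% of the data as a test set.
X_train, X_test, y_train, y_test = train_test_split(
    features, targets, test_size=0.2, random_state=0
)

# LinearRegression fits the coefficients by ordinary least squares.
ols = LinearRegression().fit(X_train, y_train)

print("intercept:", ols.intercept_)
print("coefficients:", ols.coef_)  # same order as the feature columns
print("R-squared (train):", ols.score(X_train, y_train))
```

The `coef_` array follows the column order of the training features, so each entry can be matched to a feature by position.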

The coefficients and intercept are the last parts you need to define your model and make predictions. The coefficients in the output array follow the order of the features in the dataset, so your model can be written as follows:

![]()

It is also useful to calculate some metrics to assess the quality of the model.

R-squared, also known as the coefficient of determination, gives a sense of how well the model describes the patterns in the training data, with values ranging from 0 to 1. It shows how much of the variability in the target is explained by the features[1].

For example, if you fit a linear model to the data but there is no linear relationship between the target and the features, R-squared will be very close to zero.
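One common way to compute R-squared is one minus the ratio of the residual sum of squares to the total sum of squares. The sketch below uses made-up values to show the two extremes: perfect predictions, and predictions that are no better than always guessing the mean.

```python
def r_squared(observed, predicted):
    """Coefficient of determination: 1 - RSS / TSS."""
    mean_observed = sum(observed) / len(observed)
    rss = sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))
    tss = sum((y - mean_observed) ** 2 for y in observed)
    return 1 - rss / tss


observed = [60, 80, 100]  # made-up nap durations in minutes

print(r_squared(observed, [60, 80, 100]))  # 1.0: all variability explained
print(r_squared(observed, [80, 80, 80]))   # 0.0: no better than the mean
```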

Bias and variance are metrics that help balance the two sources of error in a model:

- The bias relates to the training error, that is, the error from predictions on the training set.

- The variance relates to the generalization error, the error from predictions on the test set.

This linear model has a relatively high variance. Let’s use regularization to reduce the variance while trying to keep the bias as low as possible.

Model regularization

Regularization is a set of techniques that improve a linear model in terms of:

- Predictive accuracy by reducing the variance of the model’s predictions.

- Interpretability, by shrinking, or reducing to zero, the coefficients that are not as relevant to the model[2].

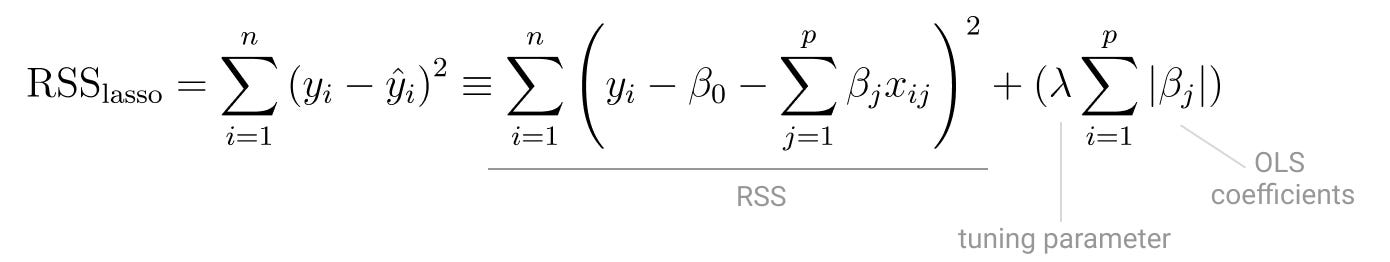

With Ordinary Least Squares, you want to minimize the residual sum of squares (RSS).

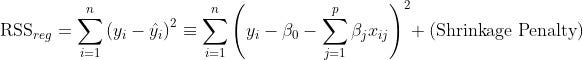

But in a regularized version of Ordinary Least Squares, you want to shrink some of its coefficients to reduce the overall model variance. You do this by applying a penalty to the residual sum of squares[1].

In the regularized version of OLS, you try to find the coefficients that minimize the residual sum of squares plus a shrinkage penalty.

The shrinkage penalty is the product of a tuning parameter and the regression coefficients, so it gets smaller as the coefficient part of the penalty gets smaller. The tuning parameter controls the impact of the shrinkage penalty on the residual sum of squares.

The shrinkage penalty is never applied to Beta 0, the intercept, because you only want to control the effect of the coefficients on the features, and there is no feature associated with the intercept. If all the features have a coefficient of zero, the target is equal to the value of the intercept.
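In symbols, the regularized objective can be sketched as follows. The notation is an assumption introduced here rather than taken from the article: n observations, p features, tuning parameter lambda, and a generic penalty function P that depends only on the feature coefficients.

```latex
\hat{\beta} = \underset{\beta}{\arg\min}
\left\{
  \sum_{i=1}^{n} \Big( y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij} \Big)^2
  + \lambda \, P(\beta_1, \dots, \beta_p)
\right\}
```

The first term is the residual sum of squares and the second is the shrinkage penalty. The intercept appears in the first term only, and setting lambda to zero recovers plain OLS.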

There are two different regularization techniques that can be applied to OLS:

Ridge regression

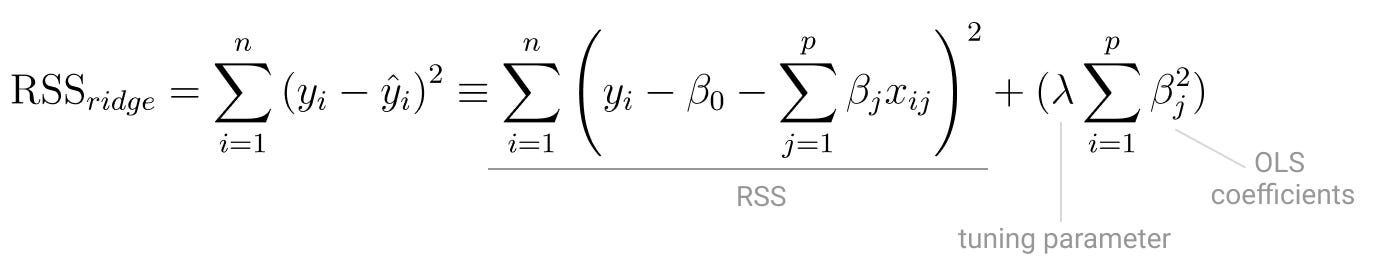

Ridge regression penalizes the sum of the squares of the coefficients.

It is also known as L2 regularization because the penalty is based on the L2 norm of the coefficient vector. As the tuning parameter lambda increases, the norm of the vector of least squares coefficients always decreases.

Although it shrinks every model coefficient in the same proportion, ridge regression will never actually shrink them to zero.

The aspect that makes this regularization more stable is also one of its drawbacks. In the end, you reduce the model variance, but the model retains its original level of complexity because none of the coefficients have been reduced to zero.

You can fit a model with ridge regression by running the following code.

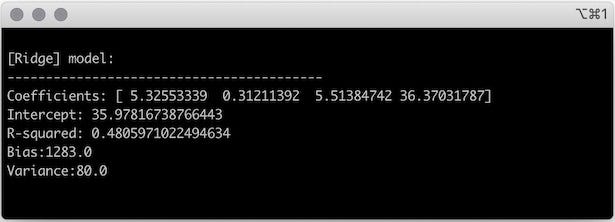

fit_model(features, goals, type="Ridge")

Here lambda, i.e. alpha in the scikit-learn method, was arbitrarily set to 0.5, but in the next section you will go through the process of tuning this parameter.
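The author's `fit_model` helper is not reproduced here. As a stand-alone sketch, assuming synthetic data (the feature columns, "true" coefficients, and noise level are invented), calling scikit-learn's `Ridge` directly with `alpha=0.5` and comparing it with plain OLS might look like this:

```python
import numpy as np
from sklearn.linear_model import LinearRegression, Ridge

# Synthetic stand-in for the nap data: 40 days, 4 features.
rng = np.random.default_rng(0)
features = rng.normal(size=(40, 4))
true_coefs = np.array([-5.0, 2.0, 4.0, 6.0])  # invented for illustration
targets = 60.0 + features @ true_coefs + rng.normal(scale=3.0, size=40)

ols = LinearRegression().fit(features, targets)
ridge = Ridge(alpha=0.5).fit(features, targets)  # alpha plays the role of lambda

print("OLS coefficients:  ", ols.coef_)
print("Ridge coefficients:", ridge.coef_)

# The L2 norm of the ridge coefficients is smaller than that of OLS,
# yet none of the ridge coefficients is exactly zero.
print("OLS norm:  ", np.linalg.norm(ols.coef_))
print("Ridge norm:", np.linalg.norm(ridge.coef_))
```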

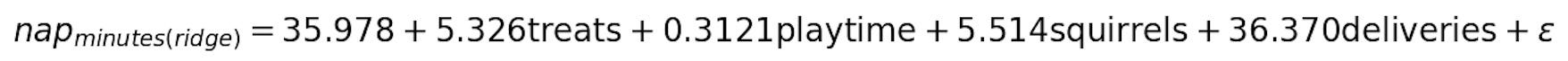

Based on the output of the ridge regression, your dog’s nap duration can be modeled as follows:

If you look at other characteristics of the model, such as R-squared, bias, and variance, you can see that they have all been reduced compared to the output from OLS.

Ridge regression was very effective at shrinking the values of the coefficients and, as a consequence, the variance of the model was significantly reduced.

However, the complexity and interpretability of the model remained the same. You still have four features that affect the duration of your dog’s daily nap.

Let’s turn to Lasso and see how it works.

Lasso

Lasso is short for Least Absolute Shrinkage and Selection Operator[2], and it penalizes the sum of the absolute values of the coefficients.

It is very similar to ridge regression, but it uses the L1 norm instead of the L2 norm as part of the shrinkage penalty. This is why Lasso is also known as L1 regularization.

The great thing about Lasso is that it will actually shrink some of the coefficients to zero, which reduces both the variance and the complexity of the model.

Lasso uses a technique called soft-thresholding[1]. It shrinks each coefficient by a constant amount, so if the absolute value of a coefficient is lower than that shrinkage constant, the coefficient is reduced to zero.
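The soft-thresholding operator itself is easy to sketch. The function below is an illustration of the operator only, not a full Lasso solver, and the coefficient values and the shrinkage constant of 0.5 are made up.

```python
def soft_threshold(coefficient, shrinkage):
    """Move a coefficient toward zero by `shrinkage`, stopping at zero."""
    magnitude = abs(coefficient) - shrinkage
    if magnitude <= 0:
        # The coefficient is smaller than the shrinkage constant.
        return 0.0
    return magnitude if coefficient > 0 else -magnitude


for value in [3.0, -2.0, 0.3, -0.4]:
    print(value, "->", soft_threshold(value, 0.5))
# 3.0 -> 2.5
# -2.0 -> -1.5
# 0.3 -> 0.0
# -0.4 -> 0.0
```

Coefficients with a large magnitude keep their sign and lose a fixed amount, while small ones are removed entirely, which is how Lasso ends up selecting features.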

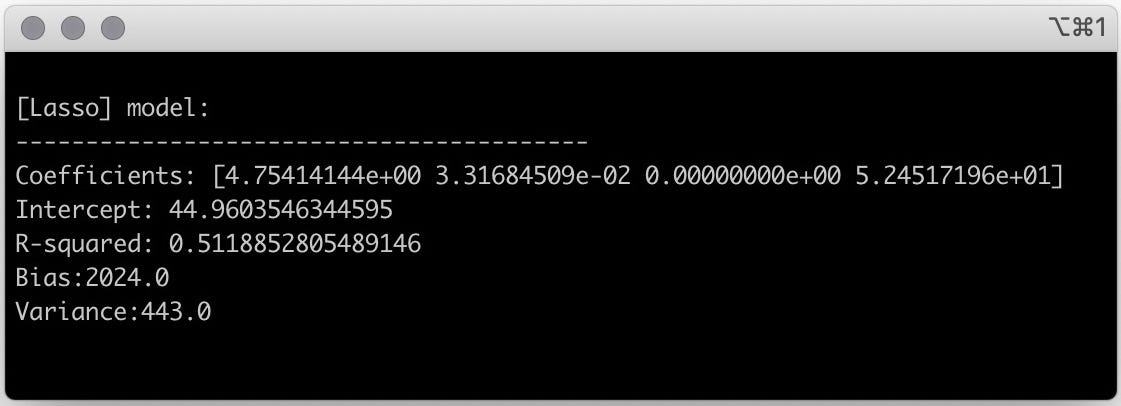

Again, with an arbitrary lambda of 0.5, you can fit Lasso to the data.

In this case, you can see that the squirrels feature was removed from the model because its coefficient is zero.
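A comparable effect can be reproduced on synthetic data, as a sketch only. The feature names, the "true" coefficients, and the noise level below are invented, and the squirrels column is deliberately given no effect so that it mirrors the outcome described above. Note that scikit-learn's `Lasso` scales the residual sum of squares by 1 / (2 * n_samples), so its `alpha` is not numerically identical to the lambda in the formulas.

```python
import numpy as np
from sklearn.linear_model import Lasso

feature_names = ["delivery", "treats", "squirrels", "play"]  # hypothetical

# Synthetic data in which the "squirrels" column has no real effect.
rng = np.random.default_rng(0)
features = rng.normal(size=(200, 4))
true_coefs = np.array([3.0, 2.0, 0.0, 1.5])  # invented for illustration
targets = 2.0 + features @ true_coefs + rng.normal(scale=0.1, size=200)

lasso = Lasso(alpha=0.5).fit(features, targets)

for name, coef in zip(feature_names, lasso.coef_):
    print(f"{name}: {coef:.3f}")

# Features whose coefficient was shrunk all the way to zero.
removed = [n for n, c in zip(feature_names, lasso.coef_) if c == 0]
print("removed features:", removed)
```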

With Lasso, your dog’s nap duration can be described as a model with three features:

![]()

The advantage here over ridge regression is that you end up with a model that is easier to interpret because it has fewer features.

Going from four to three features isn’t a big deal in terms of interpretability, but you can see how useful this can be in datasets with hundreds of features!

Find your optimal lambda

So far, the lambda you used to see ridge regression and Lasso in action was completely arbitrary. But there is a way to fine-tune the value of lambda to make sure you reduce the overall model variance.

If you plot the root mean squared error against a continuous set of lambda values, you can use the elbow technique to find the optimal value.
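As a sketch of how the values for such a plot could be produced, the loop below fits a ridge model for each lambda in a grid and records the root mean squared error on a held-out test set. The data is synthetic, and the grid from 0.01 to 10 is an arbitrary assumption; plotting `rmse_values` against `lambdas` is left to whichever plotting library you prefer.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the nap data: 60 days, 4 features.
rng = np.random.default_rng(0)
features = rng.normal(size=(60, 4))
true_coefs = np.array([-5.0, 2.0, 4.0, 6.0])  # invented for illustration
targets = 60.0 + features @ true_coefs + rng.normal(scale=3.0, size=60)

X_train, X_test, y_train, y_test = train_test_split(
    features, targets, test_size=0.2, random_state=0
)

lambdas = np.linspace(0.01, 10.0, 50)  # arbitrary grid of candidate values
rmse_values = []
for lam in lambdas:
    model = Ridge(alpha=lam).fit(X_train, y_train)
    mse = mean_squared_error(y_test, model.predict(X_test))
    rmse_values.append(float(np.sqrt(mse)))

# Print every tenth point; the elbow is read off the full curve.
for lam, rmse in zip(lambdas[::10], rmse_values[::10]):
    print(f"lambda={lam:.2f}  RMSE={rmse:.3f}")
```

The same loop works for Lasso by swapping in `sklearn.linear_model.Lasso`.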
