Over the years we have tested dozens of 3D printers, so we have a good idea of what works and what doesn't. But despite years of claims about great cheap 3D printers, not many meet our high standards for print quality.

The best one out there is still a Formlabs printer. The Form 2 was an excellent printer, but the newly released Form 3 is even better. It has a slightly larger build area, and the prints that come out of this machine are even smoother and more precise than its predecessor's, which is impressive in itself. But it still costs $3,500, which puts it out of reach for most of us. There are plenty of cheaper options, though, so if you're in the market for something more budget-friendly, read on.

Further down in this post you'll find some great deals on 3D printers, which we continuously update with the best current offers.

Formlabs Form 3

Bill Roberson / Digital Trends

The Formlabs Form 3 is by no means cheap; neither was its predecessor, the Form 2. But you get what you pay for, right? None of the other printers on this list comes close to its print quality. And, surprisingly, it's super easy to use.

It's worth noting that the Form 3 isn't your average FDM printer. It's a different breed entirely. Instead of heating plastic filament and squirting it through a nozzle to build objects layer by layer, the Form 3 uses a laser projection system to "grow" objects out of a pool of UV-curable resin. As the laser flashes across the resin tray, it solidifies a thin layer onto the build plate, which is slowly pulled upward for each new layer.

To be clear, the Form 3 isn't the only 3D printer that uses this method, but it is the easiest to use of the bunch. Formlabs packed it with a boatload of great features that make resin-based printing less of a hassle, such as an automatically filling resin tray and a clever print feature that makes objects easier to remove from the build plate. There's even a web app that lets you check the status of your print when you're not near the machine.

Lulzbot Mini 2

There are plenty of printers on the market aimed at beginners, but very few get it right. One increasingly common trend among "beginner-friendly" printers is stripping out options in favor of a watered-down, oversimplified interface. Often you get only three resolution options (high, medium, or low) and no access to or control over "confusing" settings such as extrusion speed, infill density, or hot end temperature. This lack of access makes the machine easier to operate, but it also severely limits what your 3D printer can do. It's the lazy way to make a printer "user-friendly."

Lulzbot doesn't take that lazy approach. Instead of removing advanced options, Lulzbot's software lets you choose your level of complexity and control. There's a basic interface where you only need to adjust simple settings (temperature, speed, layer height, and so on), but also an "Advanced" tab that gives you finer control over print parameters. These features are great for beginners because they keep things simple and straightforward while you're still learning, but don't stop you from going deeper, expanding your knowledge, and getting more out of your 3D printer.

The Lulzbot Mini 2 offers some major new features over its predecessor. Outwardly it looks the same, but there are big changes inside. Print speed is much faster, and thanks to a brand-new print head this printer now supports a wide variety of materials. We already got some fairly good-quality prints from the original, so in theory the new printer should be even better. And you can upgrade this printer rather than outgrow it, which is what will happen with many cheaper competitors.

Monoprice Mini Delta

Bill Roberson / Digital Trends

As far as we can tell, the Monoprice Mini Delta is one of the cheapest 3D printers you can buy that isn't a kit, but that's not the only reason we picked it. In addition to being fully assembled and ready to use out of the box, this machine has a heated bed. We consider that a crucial feature for FDM printers. It prevents warping and drastically reduces the risk of print failures, and it's usually found only on printers costing more than $1,000. This one costs $180, which is mind-blowing.

The Mini Delta also has a variable-temperature hot end, which lets you adjust settings and print with a wide range of materials. That includes basic filaments such as ABS and PLA, as well as more advanced materials such as conductive PLA, wood and metal composites, or dissolvable PVA. On top of that, the heated bed is self-leveling, so you never have to calibrate the machine manually before starting a print.

Monoprice certainly gives you a lot for your money here, but the Mini Delta isn't perfect. Beginners should expect a steep learning curve and be prepared to troubleshoot now and then. Just because it's cheap doesn't mean it's good for beginners.

Read our full Monoprice Mini Delta review

Anycubic Photon

Until recently, consumer-level stereolithography printers were essentially the unicorns of the 3D printing world. For years, only a few were sold to consumers, and they were generally far too expensive for most 3D printing enthusiasts to afford. The Anycubic Photon changes that, and it's now almost as cheap as some low-end printers.

This printer, which costs less than $250 at most retailers, is capable of producing insanely detailed objects. That's mainly thanks to the SLA/DLP printing process, which lets the machine print extremely thin layers. We're talking less than a tenth of the width of a human hair. Make no mistake: the Photon's prints are even more detailed than those of the highest-quality FDM printer we've ever tested.

Be warned, though. That detail comes at a price. Unfortunately, the Photon also has an incredibly small build envelope, so you can't print anything larger than 115 × 65 × 155 millimeters (4.5 × 2.5 × 6.1 inches). The printer's sticky, smelly, and mildly toxic resin is also a pain to work with. You have to wear gloves to avoid touching it, and soak the finished parts in isopropyl alcohol to remove uncured goo. It's nowhere near as convenient or low-maintenance as an average FDM printer.
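To make that size limit concrete, here is a minimal sketch of a fit check against the Photon's 115 × 65 × 155 mm envelope. The function name and the sample part sizes are hypothetical, and the check assumes the part's bounding box may only be turned in 90-degree steps (no diagonal placement).

```python
# Photon build envelope quoted in the article, in millimeters.
PHOTON_ENVELOPE_MM = (115.0, 65.0, 155.0)


def fits_envelope(part_mm, envelope_mm=PHOTON_ENVELOPE_MM):
    """Return True if a part's bounding box fits the build envelope.

    Assumption: the part may be rotated in 90-degree steps only, so it
    fits exactly when its sorted dimensions are each no larger than the
    envelope's sorted dimensions.
    """
    return all(p <= e for p, e in zip(sorted(part_mm), sorted(envelope_mm)))


# Hypothetical parts, for illustration only.
tall_part = (150.0, 60.0, 100.0)   # fits once stood on end
wide_part = (120.0, 70.0, 100.0)   # too wide in every orientation

print(fits_envelope(tall_part))
print(fits_envelope(wide_part))
```

A slicer does a more thorough job of this (supports and part orientation both change the usable volume), so treat the check as a rough first pass.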

The Photon is a budget beast in terms of print performance, but it isn't for everyone.

Read our full Anycubic Photon review

Monoprice Maker Select Plus

Until Monoprice entered the 3D printing arena, it was hard to find a printer under $1,000 that had a large build area, a heated bed, a sturdy frame, and an extruder that could handle many different materials. Frankly, it was almost impossible. Now there are plenty of options in this price range, and the MP Maker Select Plus is arguably the best of the bunch, unless you're willing to buy a kit and build the printer yourself.

Most printers in the sub-$500 price range have build areas no larger than 6 inches in length, width, or height. The MSP, however, has a large build envelope of 7.9 × 7.9 × 7.1 inches, which is nothing to scoff at. Not only does this mean you can print larger parts, but you can also fit more small parts onto the build plate, which shortens production time.

The build plate is also heated, which keeps the extruded filament from cooling, contracting, and warping the shape of your printed object. This feature is crucial, especially if you print with ABS: it drastically reduces the likelihood of a failed print and eliminates the need to print with a raft, which uses extra filament.

Read our full Monoprice Maker Select Plus review

Today's 3D printer deals

Some of these printers may not have made our list, but below we've put together a list of the good 3D printer deals that are currently running.

How we test

To test the various 3D printers we receive for review, we print a carefully chosen set of objects. This suite includes 3DBenchy, ctrlV v3, a warp test, and a few others. Together, these objects feature almost everything printers generally struggle with: low-slope surfaces, overhangs, unsupported spans, fine details, and much more. If a printer is bad at something, these shapes will expose it.

You can never be too sure, so we then test the printer at least another half-dozen times. We print random jobs that various staff members happen to need at the time, which gives the printers in question a more thorough workout. If we used the same job over and over to test the printer, problems with other jobs could go unnoticed.

When all is said and done, we take a handful of our test prints and shoot some high-resolution photos so you can see for yourself how they turned out. We also measure certain parts of the print with a micrometer to determine how accurate the physical model is compared with the digital model.
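As a rough illustration of that last step, the sketch below compares micrometer readings against the dimensions in the digital model and reports the deviation for each feature. The feature names and all numbers are hypothetical placeholders, not results from our tests, and the helper is only one simple way to express dimensional accuracy.

```python
def dimensional_error(nominal_mm, measured_mm):
    """Return (deviation in mm, deviation as a percentage of nominal)."""
    deviation = measured_mm - nominal_mm
    percent = 100.0 * deviation / nominal_mm
    return deviation, percent


# Hypothetical readings: feature -> (size in the digital model, micrometer reading).
measurements = {
    "wall_thickness": (2.00, 2.08),
    "hole_diameter": (10.00, 9.85),
    "overall_length": (60.00, 60.12),
}

report = {
    name: dimensional_error(nominal, measured)
    for name, (nominal, measured) in measurements.items()
}

# The feature with the largest relative error, regardless of sign.
worst = max(report, key=lambda name: abs(report[name][1]))

for name, (deviation, percent) in report.items():
    print(f"{name}: {deviation:+.2f} mm ({percent:+.1f}%)")
print("largest relative error:", worst)
```

Relative error is used for the ranking because a 0.1 mm miss matters far more on a 2 mm wall than on a 60 mm span; an absolute tolerance would be an equally reasonable choice.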

But of course, resolution and accuracy aren't everything. After we're done printing, we also assess how repairable and upgradable the machine is. We check whether you can easily take it apart and tinker with it if something goes wrong. We also ask whether you can upgrade the printer's components when newer, better ones become available. Finally, we determine whether it will be obsolete in a few years. We get under the hood and figure it all out for you.

Helpful terms to know

- FDM: Fused deposition modeling. Also known as FFF, or fused filament fabrication. This is the most common type of 3D printing. It melts a thermoplastic filament, squirts it through a nozzle, and then deposits it layer by layer to form an object.

- SLA: Short for stereolithography. A style of 3D printing that uses a laser projection system to "grow" objects out of a pool of UV-curable resin.

- Hot end: The heated nozzle through which plastic filament is extruded in an FDM printer.

- Heated bed: A heated build plate that keeps the first layers of extruded plastic from cooling and warping. When your project warps, it often leads to print failures.

- ABS: Short for acrylonitrile butadiene styrene. An oil-based plastic commonly used as 3D printing filament. It's a strong, sturdy material commonly used to make plastic car parts, musical instruments, and the ever-popular Lego bricks. ABS has a high melting point and can warp if it cools during printing. For that reason, ABS objects must be printed on a heated surface, which many home printers don't have.

- PLA: Polylactic acid is made from organic material, specifically cornstarch and sugarcane. This makes the material easier and safer to use and gives it a smoother, shinier appearance that's more aesthetically pleasing. While PLA may seem like the better choice at first glance, it has a far lower melting point than ABS. This means that using PLA-printed parts for mechanical work, or even storing them in high-temperature locations, can cause the part to warp, crack, or melt.

Empfehlungen der Redaktion

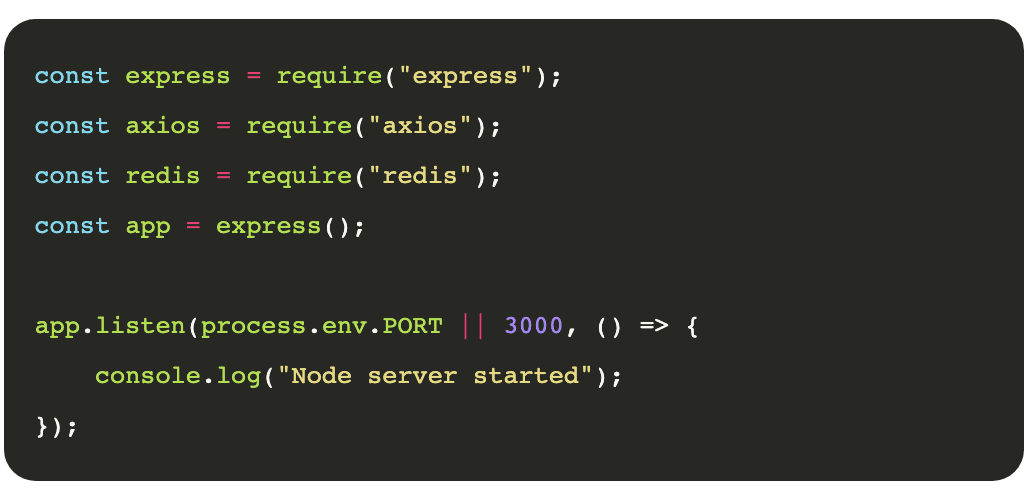

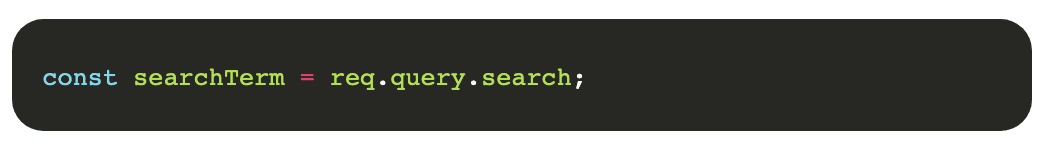

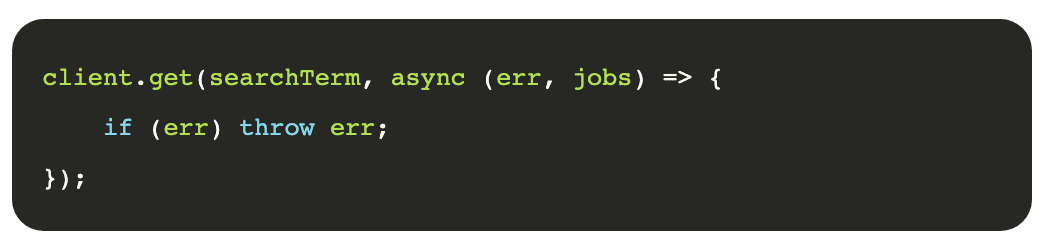

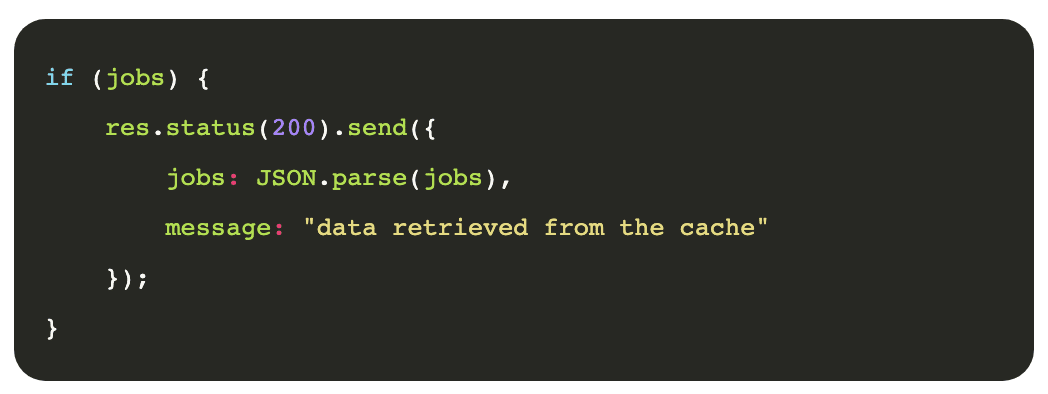

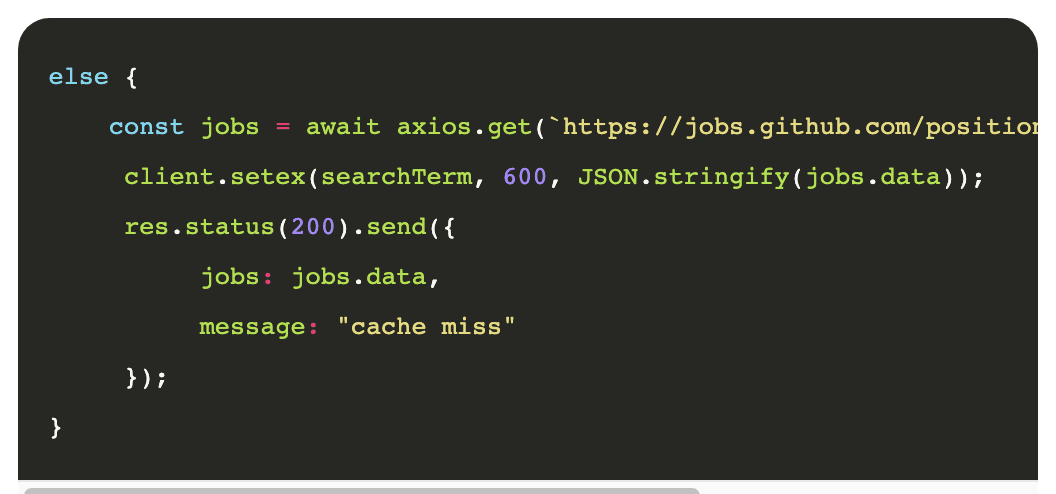

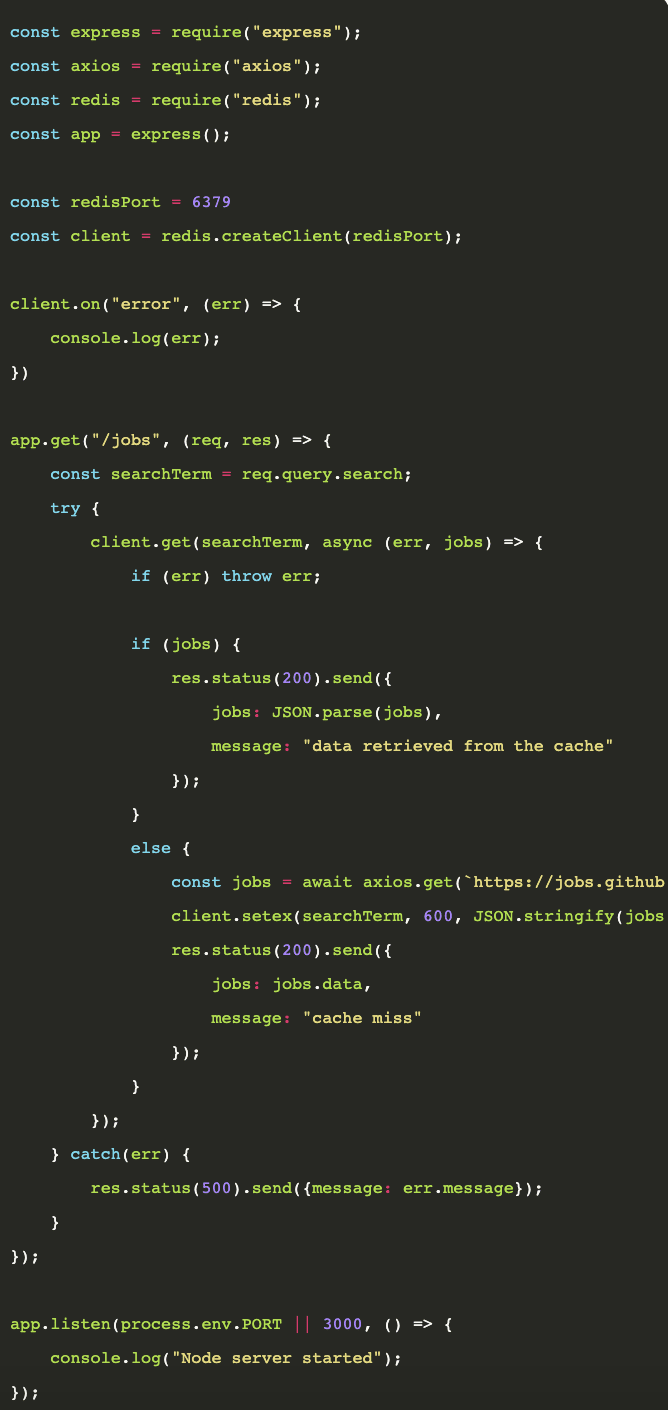

POSTMAN – GitHub Jobs API Results

POSTMAN – GitHub Jobs API Results

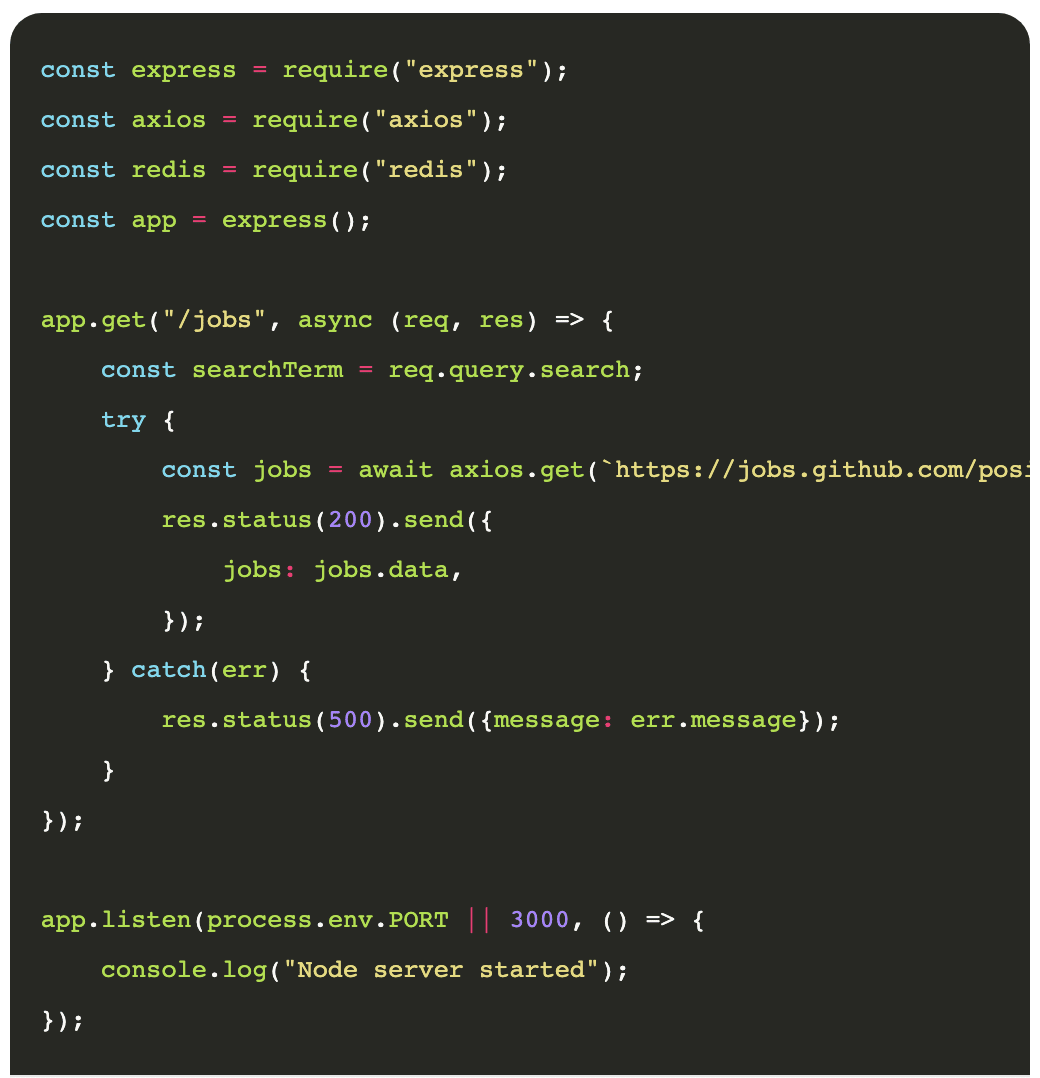

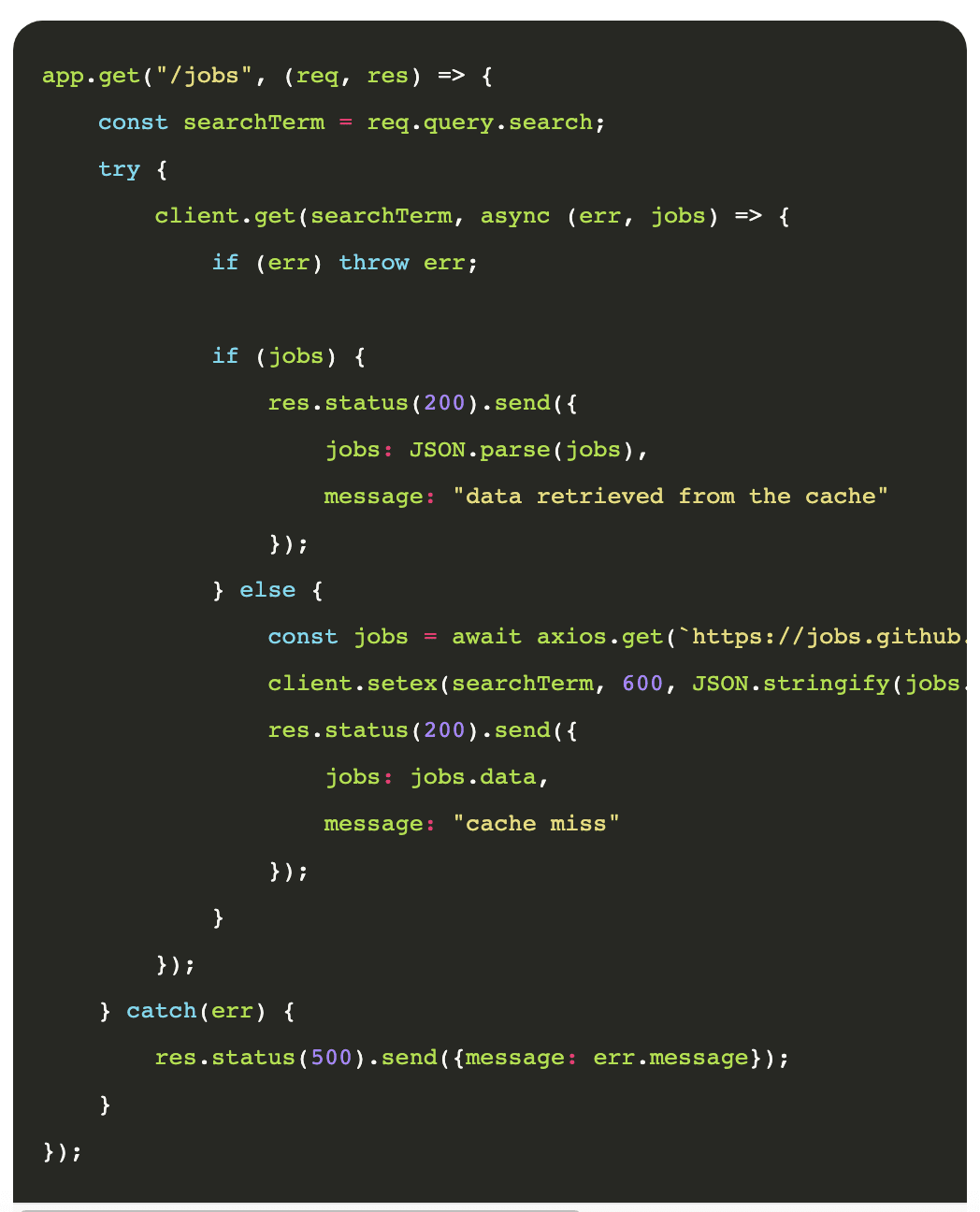

POSTMAN – Our API results

POSTMAN – Our API results

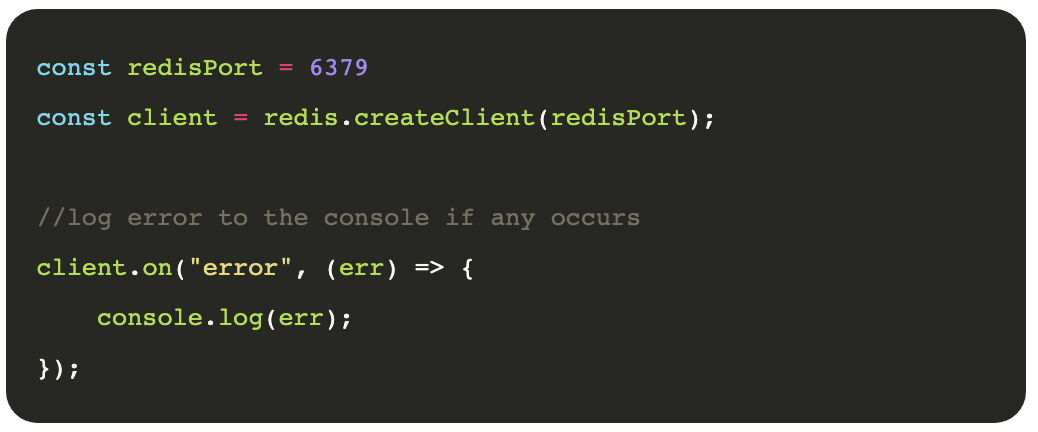

performance

performance